Most IT storage teams are familiar with the struggle: important data about your storage environment is not immediately available, and getting it means spending hours on manual reporting through spreadsheets or other internal processes. The wasted time is only part of the cost. The more harmful consequence for your business is errors: inaccurate data, and decisions that are not based on the facts. (Download PDF Case Study)

Manual Efforts Leading to Errors & Ambiguity

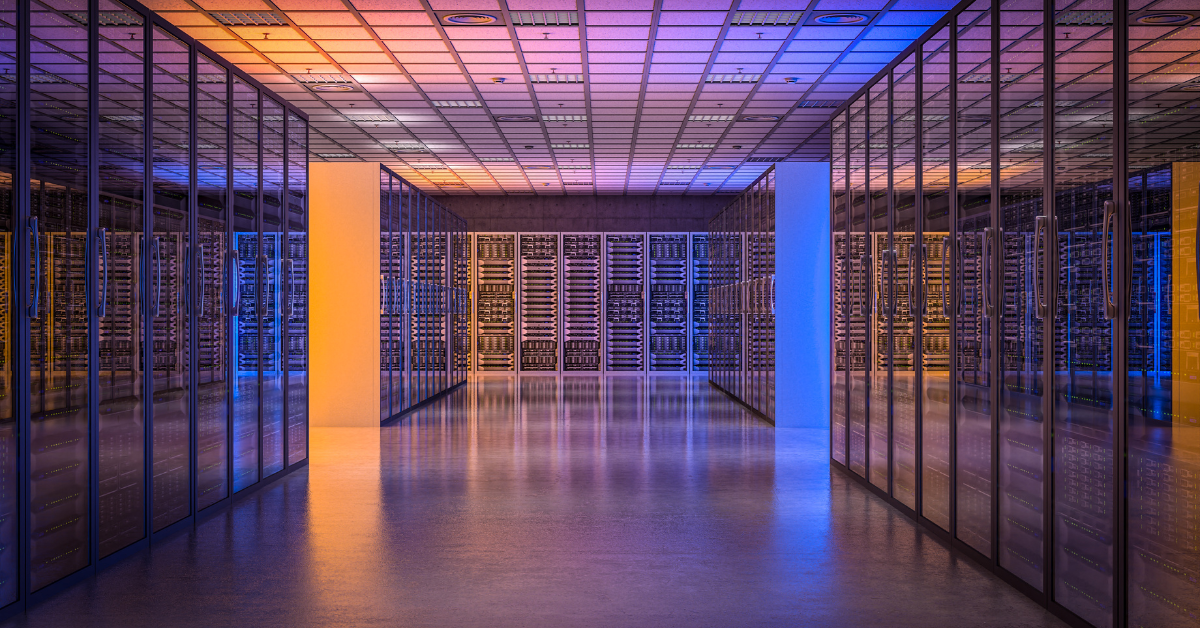

One of the nation’s leading food and beverage companies knew this problem all too well. With a mixed storage environment that included IBM and NetApp products, as well as five data centers spread across the US, their manual reporting was riddled with errors and took too much time. Worse still, it wasn’t providing the data they needed. They needed a solution that could quickly analyze and display the SAN and NAS devices spread across a complex, widely distributed network.

Manual effort was not only error-prone. It also could not show which servers used which portions of Tier 1 / Tier 2 storage, or where free space existed. Their Vice President of IT Infrastructure summarized their problems by saying, “Not only were our manual processes difficult for our storage administrators, but upper management did not have the information to make our business decisions.”

Automated Reporting on a Single Pane of Glass

Needing something different, the company turned to Visual One Intelligence (Visual One).

“We chose Visual One Intelligence because it leveraged our existing environment without requiring any additional tools or products to install,” said the VP of IT Infrastructure.

With Visual One Intelligence, their team received weekly reports and charts showing the exact status of their storage. Through the 24/7 client dashboard, they could also easily see which servers used which storage and where potential issues might be. With detailed data and customizable reports, hidden free space and storage usage details were always readily available.

The final verdict? “Visual One Intelligence helped us to make better decisions and save money.”

“This is the perfect tool for our environment: It has required no implementation time or effort, and we get the reports we need whenever we need them.”

– VP of IT Infrastructure, Major Food Producer